MAT224H1 Lecture: Isomorphisms, Linear Operators, Eigenvalues and Eigenvectors

Document Summary

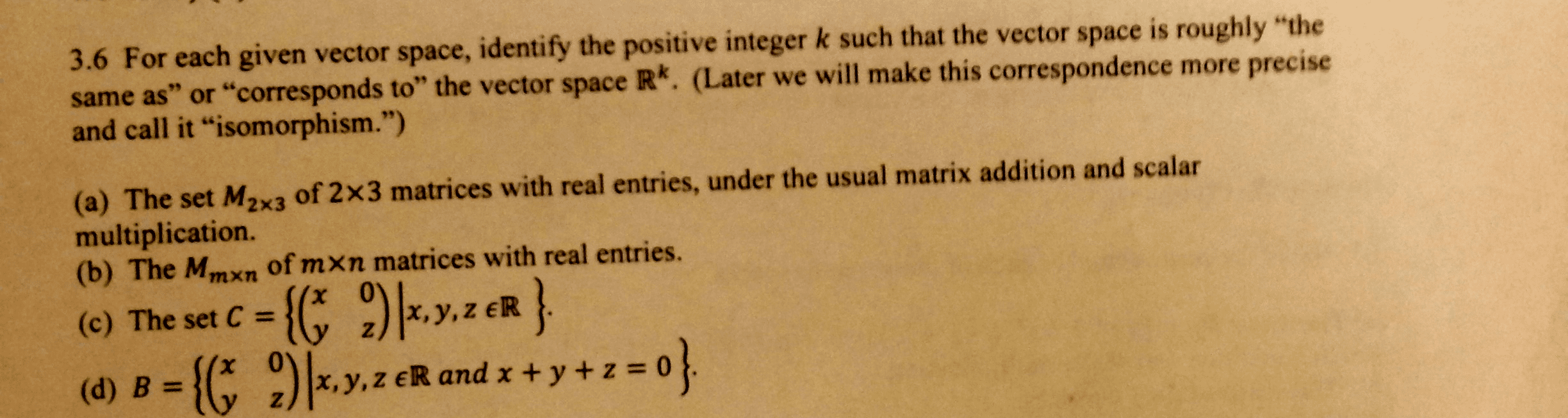

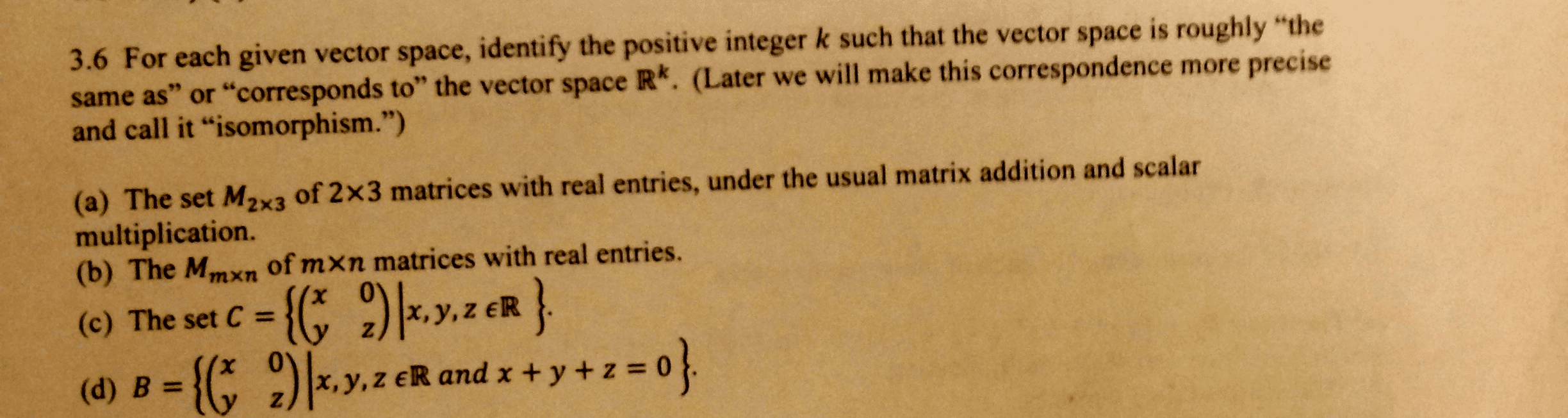

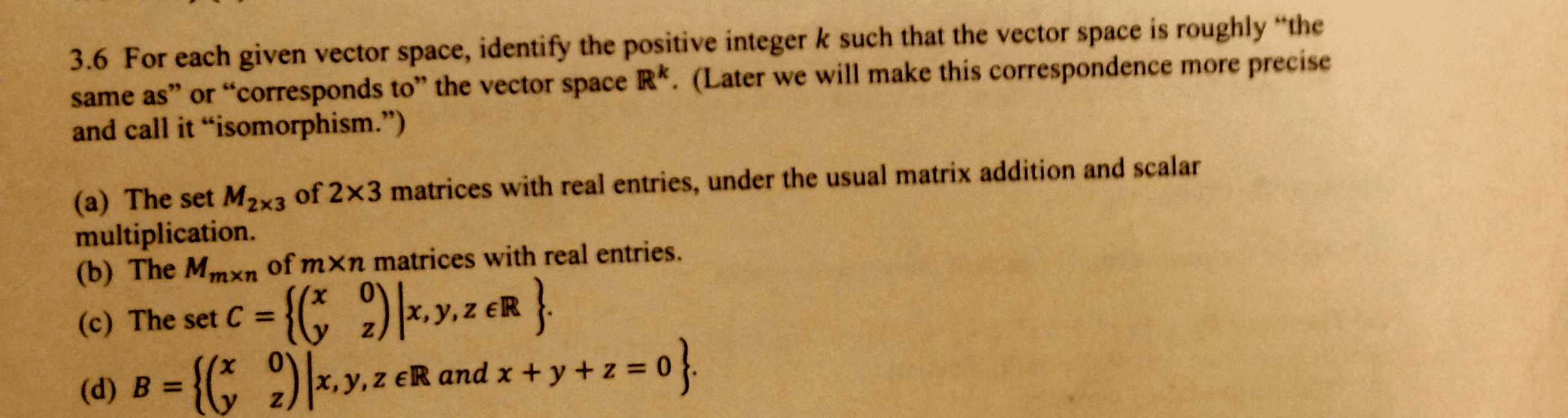

As far as vector space structure goes, this is enough: in an abstract vector space, all we can do is add vectors and multiply them by scalars. The technical term for two vector spaces being "the same" in this sense is that they are isomorphic.

Vector spaces $V$ and $W$ over the same field $K$ are called isomorphic if there exists an invertible linear transformation $T : V \to W$. Such a transformation is called an isomorphism of $V$ and $W$.

Example: $P_n(K)$ is isomorphic to $K^{n+1}$, with the isomorphism $T(a_0 + a_1 x + \cdots + a_n x^n) = (a_0, a_1, \ldots, a_n)$.

We can generalize this example and prove the following theorem: if $\dim V = n$, then $V$ is isomorphic to $K^n$. Proof: choose a basis $\alpha = (v_1, \ldots, v_n)$ of $V$ and let $T : V \to K^n$ be the transformation that sends each vector to its coordinates relative to $\alpha$: $T(v) = [v]_\alpha$. This transformation is linear (exercise) and is invertible: its inverse is $T^{-1}(a_1, \ldots, a_n) = a_1 v_1 + \cdots + a_n v_n$.
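The coordinate map relative to a basis can be tried out on a small case. The following is a minimal Python sketch, assuming the vector space is $\mathbb{Q}^2$ with a hypothetical basis $((1, 1), (1, -1))$ chosen only for illustration. The names `coords` and `from_coords` are not from the lecture; they stand for the coordinate map and its inverse.

```python
from fractions import Fraction

# Hypothetical basis alpha = (V1, V2) of Q^2, chosen only for illustration.
V1 = (Fraction(1), Fraction(1))
V2 = (Fraction(1), Fraction(-1))


def coords(v):
    """Coordinate map: return (a1, a2) with v = a1*V1 + a2*V2 (Cramer's rule)."""
    det = V1[0] * V2[1] - V2[0] * V1[1]
    if det == 0:
        raise ValueError("V1 and V2 do not form a basis")
    a1 = (v[0] * V2[1] - V2[0] * v[1]) / det
    a2 = (V1[0] * v[1] - v[0] * V1[1]) / det
    return (a1, a2)


def from_coords(a):
    """Inverse map: send (a1, a2) to the vector a1*V1 + a2*V2."""
    return (a[0] * V1[0] + a[1] * V2[0], a[0] * V1[1] + a[1] * V2[1])


def add(u, w):
    """Componentwise sum of two pairs."""
    return (u[0] + w[0], u[1] + w[1])


def scale(c, u):
    """Scalar multiple of a pair."""
    return (c * u[0], c * u[1])


if __name__ == "__main__":
    v = (Fraction(3), Fraction(1))
    w = (Fraction(0), Fraction(5))
    print(coords(v))               # coordinates of v relative to alpha
    print(from_coords(coords(v)))  # round trip recovers v
    # Spot-check linearity on one pair of vectors and one scalar.
    print(coords(add(v, w)) == add(coords(v), coords(w)))
    print(coords(scale(7, v)) == scale(7, coords(v)))
```

Exact `Fraction` arithmetic is used so that the round trip and the linearity checks compare equal without floating-point tolerance. Checking a few vectors illustrates the claim but does not prove it.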