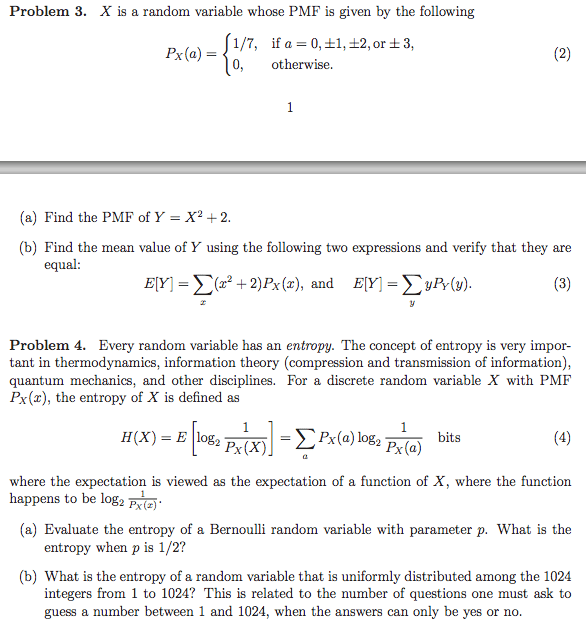

$X$ is a random variable whose PMF $P_X(a)$ is given in the image above.

Find the PMF of $Y = X^2 + 2$.

Find the mean value of $Y$ using the following two expressions and verify that they are equal: $E[Y] = \sum_y y\,P_Y(y)$ and $E[Y] = \sum_x (x^2 + 2)\,P_X(x)$.

Every random variable has an entropy. The concept of entropy is very important in thermodynamics, information theory (compression and transmission of information), quantum mechanics, and other disciplines. For a discrete random variable $X$ with PMF $P_X(x)$, the entropy of $X$ is defined as $H(X) = E[-\log_2 P_X(X)] = -\sum_x P_X(x)\log_2 P_X(x)$, where the expectation is viewed as the expectation of a function of $X$, and the function happens to be $-\log_2 P_X(x)$.

Evaluate the entropy of a Bernoulli random variable with parameter $p$. What is the entropy when $p$ is 1/2?

What is the entropy of a random variable that is uniformly distributed among the 1024 integers from 1 to 1024? This is related to the number of questions one must ask to guess a number between 1 and 1024, when the answers can only be yes or no.
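The steps the problem asks for can be sketched numerically. The Python below is only an illustration under stated assumptions: the PMF `pmf_x` is hypothetical (the actual PMF of X is not reproduced in the text), the entropy is taken in bits (logarithm base 2), and the helper names `pmf_of_function`, `entropy_bits`, and `bernoulli_entropy` are made up for this sketch.

```python
import math
from collections import defaultdict
from fractions import Fraction

# Hypothetical PMF, for illustration only; substitute the PMF from the problem.
pmf_x = {-1: Fraction(1, 3), 0: Fraction(1, 3), 1: Fraction(1, 3)}


def g(x):
    """The transformation Y = X^2 + 2."""
    return x**2 + 2


def pmf_of_function(pmf, func):
    """PMF of func(X): add up P_X(x) over every x that maps to the same value."""
    out = defaultdict(Fraction)
    for x, p in pmf.items():
        out[func(x)] += p
    return dict(out)


pmf_y = pmf_of_function(pmf_x, g)

# Two ways to compute E[Y]: from the PMF of Y, and directly from the PMF of X.
mean_from_y = sum(y * p for y, p in pmf_y.items())
mean_from_x = sum(g(x) * p for x, p in pmf_x.items())


def entropy_bits(probs):
    """Entropy in bits, assuming base-2 logs; zero-probability terms contribute 0."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def bernoulli_entropy(p):
    """Entropy in bits of a Bernoulli random variable with parameter p."""
    return entropy_bits([p, 1 - p])


h_half = bernoulli_entropy(0.5)
h_uniform = entropy_bits([1 / 1024] * 1024)

print(pmf_y, mean_from_y, mean_from_x)
print(h_half, h_uniform)
```

With the hypothetical PMF, both expressions for the mean give 8/3. The Bernoulli entropy at p = 1/2 comes out to 1 bit, and the uniform case gives 10 bits, which matches the ten yes/no questions a binary search needs to pin down one of 1024 numbers.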
