I would like to prove the last part (d).

Thank you

42 Metric Spaces

Proof. The proof is somewhat lengthy but straightforward. We subdivide it into four steps (a) to (d). We construct

(a) $\hat X = (\hat X, \hat d)$,
(b) an isometry $T$ of $X$ onto $W$, where $\bar W = \hat X$.

Then we prove

(c) completeness of $\hat X$,
(d) uniqueness of $\hat X$, except for isometries.

Roughly speaking, our task will be the assignment of suitable limits to Cauchy sequences in $X$ that do not converge. However, we should not introduce "too many" limits, but take into account that certain sequences "may want to converge with the same limit" since the terms of those sequences "ultimately come arbitrarily close to each other." This intuitive idea can be expressed mathematically in terms of a suitable equivalence relation [see (1), below]. This is not artificial but is suggested by the process of completion of the rational line mentioned at the beginning of the section. The details of the proof are as follows.

(a) Construction of $\hat X = (\hat X, \hat d)$. Let $(x_n)$ and $(x_n')$ be Cauchy sequences in $X$. Define $(x_n)$ to be equivalent¹ to $(x_n')$, written $(x_n) \sim (x_n')$, if

$$\lim_{n\to\infty} d(x_n, x_n') = 0. \tag{1}$$

Let $\hat X$ be the set of all equivalence classes $\hat x, \hat y, \dots$ of Cauchy sequences thus obtained. We write $(x_n) \in \hat x$ to mean that $(x_n)$ is a member of $\hat x$ (a representative of the class $\hat x$). We now set

$$\hat d(\hat x, \hat y) = \lim_{n\to\infty} d(x_n, y_n) \tag{2}$$

where $(x_n) \in \hat x$ and $(y_n) \in \hat y$. We show that this limit exists. We have

$$d(x_n, y_n) \le d(x_n, x_m) + d(x_m, y_m) + d(y_m, y_n);$$

hence we obtain

$$d(x_n, y_n) - d(x_m, y_m) \le d(x_n, x_m) + d(y_m, y_n)$$

and a similar inequality with $m$ and $n$ interchanged. Together,

$$|d(x_n, y_n) - d(x_m, y_m)| \le d(x_n, x_m) + d(y_m, y_n). \tag{3}$$

¹ For a review of the concept of equivalence, see A1.4 in Appendix 1.

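1.6 Completion of Metric Spaces 43

Since $(x_n)$ and $(y_n)$ are Cauchy, we can make the right side as small as we please. This implies that the limit in (2) exists because $\mathbf{R}$ is complete.

We must also show that the limit in (2) is independent of the particular choice of representatives. In fact, if $(x_n) \sim (x_n')$ and $(y_n) \sim (y_n')$, then by (1),

$$|d(x_n, y_n) - d(x_n', y_n')| \le d(x_n, x_n') + d(y_n, y_n') \longrightarrow 0$$

as $n \to \infty$, which implies the assertion

$$\lim_{n\to\infty} d(x_n, y_n) = \lim_{n\to\infty} d(x_n', y_n').$$

We prove that $\hat d$ in (2) is a metric on $\hat X$. Obviously, $\hat d$ satisfies (M1) in Sec. 1.1 as well as $\hat d(\hat x, \hat x) = 0$ and (M3). Furthermore,

$$\hat d(\hat x, \hat y) = 0 \implies (x_n) \sim (y_n) \implies \hat x = \hat y$$

gives (M2), and (M4) for $\hat d$ follows from

$$d(x_n, y_n) \le d(x_n, z_n) + d(z_n, y_n)$$

by letting $n \to \infty$, with $(z_n) \in \hat z$.

(b) Construction of an isometry $T\colon X \to W \subset \hat X$. With each $b \in X$ we associate the class $\hat b \in \hat X$ which contains the constant Cauchy sequence $(b, b, \dots)$. This defines a mapping $T\colon X \to W$ onto the subspace $W = T(X) \subset \hat X$. The mapping $T$ is given by $b \mapsto \hat b = Tb$, where $(b, b, \dots) \in \hat b$. We see that $T$ is an isometry since (2) becomes simply

$$\hat d(\hat b, \hat c) = d(b, c);$$

here $\hat c$ is the class of $(y_n)$ where $y_n = c$ for all $n$. Any isometry is injective, and $T\colon X \to W$ is surjective since $T(X) = W$. Hence $W$ and $X$ are isometric; cf. Def. 1.6-1(b).

We show that $W$ is dense in $\hat X$. We consider any $\hat x \in \hat X$. Let $(x_n) \in \hat x$. For every $\varepsilon > 0$ there is an $N$ such that

$$d(x_n, x_N) < \frac{\varepsilon}{2} \qquad (n > N).$$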

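Let $(x_N, x_N, \dots) \in \hat x_N$. Then $\hat x_N \in W$. By (2),

$$\hat d(\hat x, \hat x_N) = \lim_{n\to\infty} d(x_n, x_N) \le \frac{\varepsilon}{2} < \varepsilon.$$

This shows that every $\varepsilon$-neighborhood of the arbitrary $\hat x \in \hat X$ contains an element of $W$. Hence $W$ is dense in $\hat X$.

(c) Completeness of $\hat X$. Let $(\hat x_n)$ be any Cauchy sequence in $\hat X$. Since $W$ is dense in $\hat X$, for every $\hat x_n$ there is a $\hat z_n \in W$ such that

$$\hat d(\hat x_n, \hat z_n) < \frac{1}{n}. \tag{4}$$

Hence by the triangle inequality,

$$\hat d(\hat z_m, \hat z_n) \le \hat d(\hat z_m, \hat x_m) + \hat d(\hat x_m, \hat x_n) + \hat d(\hat x_n, \hat z_n) < \frac{1}{m} + \hat d(\hat x_m, \hat x_n) + \frac{1}{n},$$

and this is less than any given $\varepsilon > 0$ for sufficiently large $m$ and $n$ because $(\hat x_m)$ is Cauchy. Hence $(\hat z_m)$ is Cauchy. Since $T\colon X \to W$ is isometric and $\hat z_m \in W$, the sequence $(z_m)$, where $z_m = T^{-1}\hat z_m$, is Cauchy in $X$. Let $\hat x \in \hat X$ be the class to which $(z_m)$ belongs. We show that $\hat x$ is the limit of $(\hat x_n)$. By (4),

$$\hat d(\hat x_n, \hat x) \le \hat d(\hat x_n, \hat z_n) + \hat d(\hat z_n, \hat x) < \frac{1}{n} + \hat d(\hat z_n, \hat x). \tag{5}$$

Since $(z_m) \in \hat x$ (see right before) and $\hat z_n \in W$, so that $(z_n, z_n, z_n, \dots) \in \hat z_n$, the inequality (5) becomes

$$\hat d(\hat x_n, \hat x) < \frac{1}{n} + \lim_{m\to\infty} d(z_n, z_m)$$

and the right side is smaller than any given $\varepsilon > 0$ for sufficiently large $n$. Hence the arbitrary Cauchy sequence $(\hat x_n)$ in $\hat X$ has the limit $\hat x \in \hat X$, and $\hat X$ is complete.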
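The phrase "the right side is smaller than any given $\varepsilon > 0$ for sufficiently large $n$" at the end of (c) can be spelled out as follows. This is only a sketch; the index $N_\varepsilon$ is notation introduced here for illustration and does not appear in the text.

```latex
% N_\varepsilon is hypothetical notation introduced only for this sketch.
Fix $\varepsilon > 0$. Since $(z_m)$ is Cauchy in $X$, there is an $N_\varepsilon$ such that
\[
  d(z_n, z_m) < \frac{\varepsilon}{2} \qquad (m, n > N_\varepsilon).
\]
Letting $m \to \infty$ (the limit exists by (2), since it equals $\hat d(\hat z_n, \hat x)$) gives
$\lim_{m\to\infty} d(z_n, z_m) \le \varepsilon/2$ for every $n > N_\varepsilon$.
If moreover $n > 2/\varepsilon$, then $1/n < \varepsilon/2$, and the last inequality of (c) yields
\[
  \hat d(\hat x_n, \hat x) < \frac{1}{n} + \lim_{m\to\infty} d(z_n, z_m)
  < \frac{\varepsilon}{2} + \frac{\varepsilon}{2} = \varepsilon
  \qquad \bigl(n > \max(N_\varepsilon, 2/\varepsilon)\bigr),
\]
that is, $\hat x_n \to \hat x$ in $\hat X$.
```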

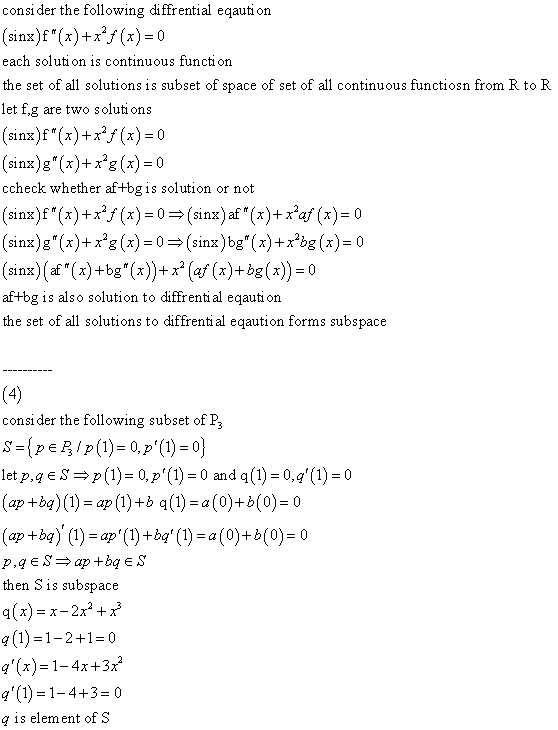

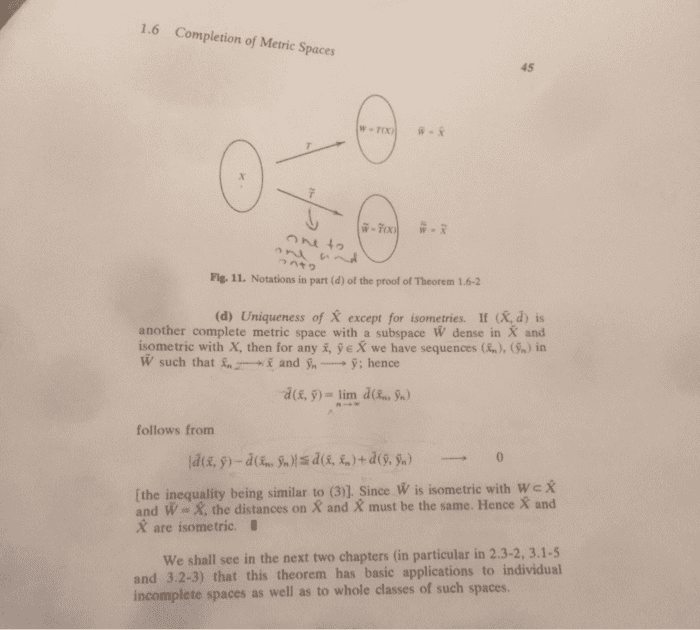

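Fig. 11. Notations in part (d) of the proof of Theorem 1.6-2

(d) Uniqueness of $\hat X$ except for isometries. If $(\tilde X, \tilde d)$ is another complete metric space with a subspace $\tilde W$ dense in $\tilde X$ and isometric with $X$, then for any $\tilde x, \tilde y \in \tilde X$ we have sequences $(\tilde x_n)$, $(\tilde y_n)$ in $\tilde W$ such that $\tilde x_n \to \tilde x$ and $\tilde y_n \to \tilde y$; hence

$$\tilde d(\tilde x, \tilde y) = \lim_{n\to\infty} \tilde d(\tilde x_n, \tilde y_n)$$

follows from

$$|\tilde d(\tilde x, \tilde y) - \tilde d(\tilde x_n, \tilde y_n)| \le \tilde d(\tilde x, \tilde x_n) + \tilde d(\tilde y, \tilde y_n) \longrightarrow 0$$

[the inequality being similar to (3)]. Since $\tilde W$ is isometric with $W \subset \hat X$ and $\bar W = \hat X$, the distances on $\tilde X$ and $\hat X$ must be the same. Hence $\tilde X$ and $\hat X$ are isometric.

We shall see in the next two chapters (in particular in 2.3-2, 3.1- and 3.2-3) that this theorem has basic applications to individual incomplete spaces as well as to whole classes of such spaces.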
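Since the question is about (d), here is one way to turn "the distances on $\tilde X$ and $\hat X$ must be the same" into an explicit isometry of $\tilde X$ onto $\hat X$. It is a sketch that uses only (2), the density of $W$ in $\hat X$ and of $\tilde W$ in $\tilde X$, completeness of both spaces, and the inequality of type (3) quoted in (d). The maps $\tilde T$, $\varphi$ and $\Phi$ are names introduced here for illustration; they do not appear in the text.

```latex
% Hypothetical notation (not in the text):
%   \tilde T : X -> \tilde W  is the given isometry of X onto \tilde W,
%   T : X -> W                is the isometry from step (b),
%   \varphi := T \circ \tilde T^{-1} : \tilde W -> W, a distance-preserving bijection:
%   \hat d(\varphi\tilde u, \varphi\tilde v) = \tilde d(\tilde u, \tilde v) for all \tilde u, \tilde v in \tilde W.

\textbf{Definition of $\Phi\colon \tilde X \to \hat X$.}
Let $\tilde x \in \tilde X$ and pick $(\tilde x_n)$ in $\tilde W$ with $\tilde x_n \to \tilde x$
(possible because $\tilde W$ is dense in $\tilde X$). Since $\varphi$ preserves distances,
\[
  \hat d(\varphi\tilde x_m, \varphi\tilde x_n) = \tilde d(\tilde x_m, \tilde x_n),
\]
so $(\varphi\tilde x_n)$ is Cauchy in $\hat X$ and converges, $\hat X$ being complete by (c).
Set $\Phi\tilde x := \lim_{n\to\infty} \varphi\tilde x_n$.

\textbf{$\Phi$ is well defined.}
If also $\tilde x_n' \to \tilde x$ with $\tilde x_n' \in \tilde W$, then
\[
  \hat d(\varphi\tilde x_n, \varphi\tilde x_n') = \tilde d(\tilde x_n, \tilde x_n')
  \le \tilde d(\tilde x_n, \tilde x) + \tilde d(\tilde x, \tilde x_n') \longrightarrow 0,
\]
so both image sequences have the same limit in $\hat X$.

\textbf{$\Phi$ preserves distances.}
Take $\tilde x_n \to \tilde x$ and $\tilde y_n \to \tilde y$ in $\tilde W$. An inequality of type (3)
holds in $\hat X$ as well as in $\tilde X$, so the metric is continuous in both spaces and
\[
  \hat d(\Phi\tilde x, \Phi\tilde y)
  = \lim_{n\to\infty} \hat d(\varphi\tilde x_n, \varphi\tilde y_n)
  = \lim_{n\to\infty} \tilde d(\tilde x_n, \tilde y_n)
  = \tilde d(\tilde x, \tilde y).
\]
In particular $\Phi$ is injective.

\textbf{$\Phi$ maps onto $\hat X$.}
Let $\hat x \in \hat X$. Since $\bar W = \hat X$ by (b), pick $\hat w_n \in W$ with $\hat w_n \to \hat x$.
The points $\tilde w_n := \varphi^{-1}\hat w_n \in \tilde W$ satisfy
$\tilde d(\tilde w_m, \tilde w_n) = \hat d(\hat w_m, \hat w_n)$, so $(\tilde w_n)$ is Cauchy and
converges to some $\tilde x \in \tilde X$, because $\tilde X$ is complete. By the definition of $\Phi$,
\[
  \Phi\tilde x = \lim_{n\to\infty} \varphi\tilde w_n = \lim_{n\to\infty} \hat w_n = \hat x.
\]

Hence $\Phi$ is a bijective isometry of $\tilde X$ onto $\hat X$, i.e. $\tilde X$ and $\hat X$
are isometric in the sense of Def. 1.6-1(b).
```

Note that $\Phi$ restricted to $\tilde W$ is just $\varphi$ (use constant sequences), so this is the unique distance-preserving extension of $\varphi$; the argument does not depend on how $\hat X$ was built in (a), only on the properties proved in (b) and (c).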